Who Wrote This?

May 6, 2026

Transparency is not a rule to be followed. It is a judgment to be exercised about what the reader is entitled to know, and what you are asking them to trust.

AI use is becoming common across many forms of writing. The range runs from Grammarly quietly fixing a comma to Claude or ChatGPT generating entire arguments from a three-word prompt, and somewhere in between sits a vast grey territory of restructured drafts, AI-sharpened reasoning, and machine-assisted prose that its authors still call their own. The debate about whether all of this requires disclosure has grown louder as these tools have grown more capable, and it tends to produce one of two unsatisfying positions: either all AI use must be declared, or it is nobody's business. Both positions are wrong. Neither thinks carefully about what transparency is actually for.

Before making that argument, it seems only honest to disclose my use of AI for this essay. I wrote it with AI assistance, specifically Claude, which I used to pressure-test my reasoning, challenge the logic, and probe the weaker edges of what I wanted to say. The arguments here are mine. The thinking was shaped in conversation with a machine, and with colleagues who engaged with this piece and provided feedback throughout the writing process. I developed three questions to guide the disclosure of AI use in writing, and I applied them to my own use of AI in this essay to see what they imply for disclosure. The result is more complicated than a simple yes or no. The point of this essay is to tease out that complexity.

The disclosure principle

Disclosing every instance of AI involvement in a piece of writing, down to each time a spell-checker touches your text or an autocomplete finishes your sentence, would be noise. Demanding disclosure of these interactions is not transparency; it is a category error that drowns the signal in irrelevant information. Some threshold of AI contribution must exist before disclosure becomes meaningful.

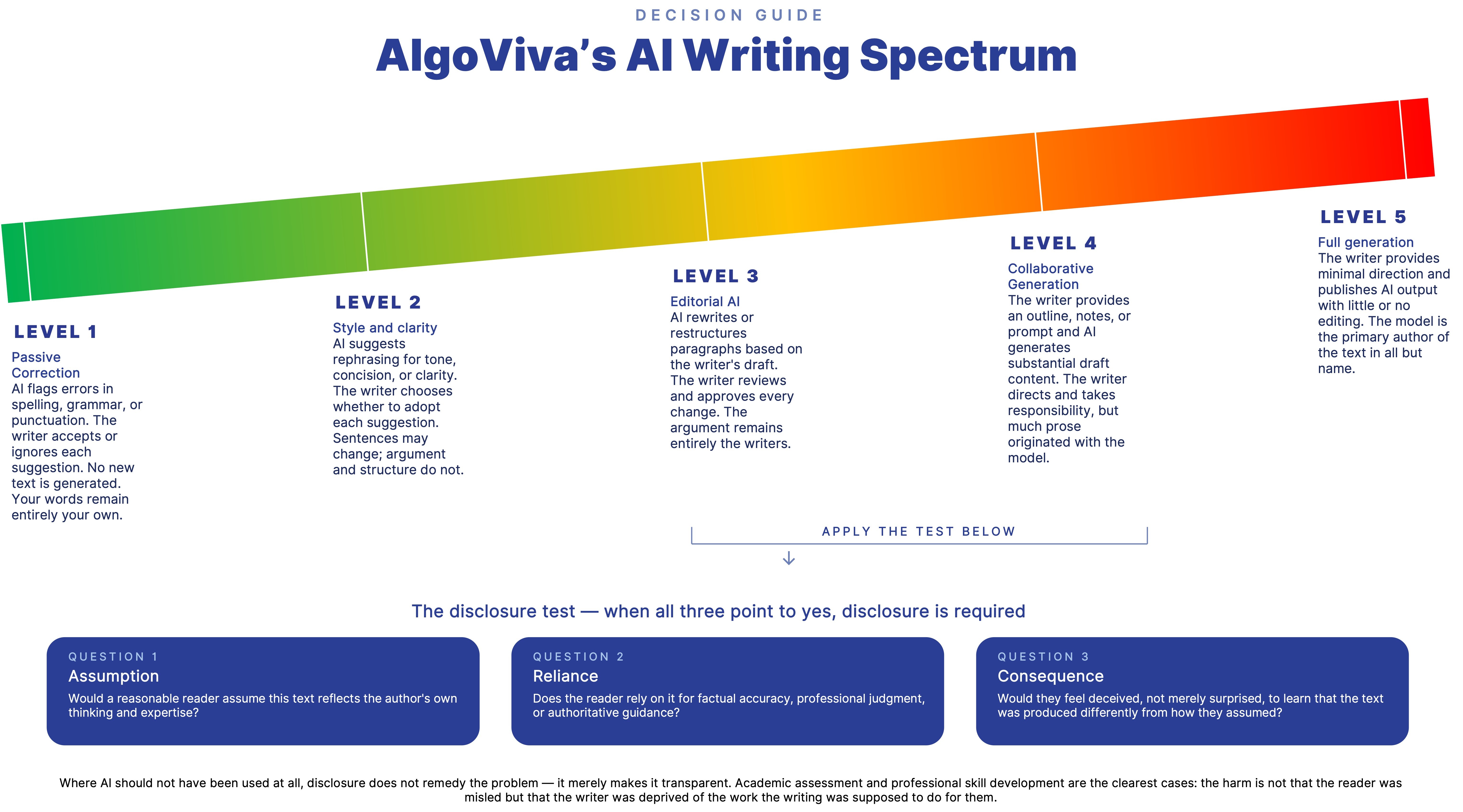

The materiality argument provides one such threshold. On this view, disclosure is triggered when AI's contribution crosses from incidental to significant — when it does enough work that a reasonable person would say the text is partly the machine's rather than wholly the writer's. This is a practical and intuitive standard, and I do not abandon it entirely. The spectrum in the decision guide accompanying this essay is built on something like it: Levels 1 and 2 describe contributions so incidental that disclosure would be absurd; Level 5 describes a contribution so dominant that disclosure is non-negotiable. Materiality of contribution is a useful anchor.

But it is only an anchor, not the full argument, for two reasons.

The first is that the materiality argument cannot account for who is writing. A doctor who uses AI substantially to draft a clinical newsletter has a different disclosure obligation than a student who uses AI at the same level and submits the result as independent medical analysis. The volume of AI contribution is identical in both cases. What differs is what each writer's readers are entitled to assume and what they are relying on. The materiality argument cannot make this distinction.

The second is that the materiality argument mistakes the measure for the thing being measured. It tells you how much AI contributed, but not why that matters. Consider what happened when readers discovered that a popular author had used a ghostwriter for a novel they believed was written in her own voice: the backlash was not about the ghostwriter's word count. The volume of outside contribution had not changed between the moment of discovery and the moment before it. What had changed was the readers' understanding of what they shared with the author: the belief that the intimacy and authenticity they had connected with was genuinely hers. The materiality of the contribution was real, but it was not the source of the betrayal. The breach of that understanding was.

This is what the materiality argument cannot capture, and what a disclosure principle must be built around. Disclosure exists to protect the reader's ability to calibrate trust. The materiality argument is a useful heuristic for identifying when that protection is likely to be needed, but it does not explain why the protection exists in the first place.

What should govern the decision to disclose is not which tool was used or how much it contributed. It is the gap between the nature and degree of human involvement the reader assumes stands behind the text and what actually occurred. A patient reading a doctor's newsletter expects a human voice shaped by clinical expertise. A voter reading an opinion piece expects genuine reasoning from a person with a real stake in the argument. A professor reviewing a student's essay expects that student's own intellectual work. In each case, discovering AI involvement would not merely surprise the reader; it would feel like a breach of the implicit contract between writer and reader. That breach is the disclosure trigger. The disclosure obligation should not simply track intensity of use. It should track the gap between the human involvement the reader is entitled to assume and the degree to which that assumption holds.

That gap, in practice, surfaces through three questions.

Would a reasonable reader assume this text reflects the author's own thinking and expertise?

Does the reader rely on it for factual accuracy, professional judgment, or authoritative guidance?

And would they feel deceived, not merely surprised, to learn that the text was produced differently from how they assumed?

The third question is the moral hinge of the test. If a reader would feel deceived rather than merely surprised, it is because learning the truth would have changed how they engaged with the text, which means the withholding of it was consequential. Where all three answers converge, disclosure is not optional. Where they diverge, the obligation weakens, and the cases where they diverge are far more numerous than the blanket-disclosure camp tends to acknowledge.

Applying the test

Now let us apply that test honestly to this essay. The first question asks whether a reasonable reader would assume this text reflects the author's own thinking and expertise. The answer is yes, and it is the most interesting answer of the three, because the assumption is only partly true. The argument is genuinely mine, developed and owned through the process of writing. Where AI contributed most substantially is the prose: the sharpening, the structuring, the expression. A reader assumes both the thinking and the voice are mine. It is on the voice side that the assumption diverges most from what actually happened. This is also where the materiality argument runs into its limit: it would register the AI's contribution to the prose as significant and trigger disclosure on that basis, without asking the more precise question of what exactly the reader is relying on and where the assumption actually breaks down.

The second question changes the picture further. I co-founded AlgoViva, an AI governance and assurance company, and I hold a PhD in the ethics of AI. A reader encountering this essay will not read it as general commentary. They will grant it credibility appropriate to someone who works in AI governance professionally and can be expected to know what they are talking about. That credibility is a form of authority, and authority creates reliance. Whether or not the reader articulates it this way, they are trusting not just the argument but the standing of the person making it — which means the provenance of both the argument and the prose becomes information a reader exercising their own judgment would reasonably want.

The third question, whether the reader would feel deceived rather than merely surprised, is harder to answer with certainty. But the combination of professional credibility and subject-matter expertise pushes the test toward disclosure rather than away from it.

I disclosed, then, not because my own test produces an unambiguous mandate; it is genuinely closer to the grey zone than the clear end. I disclosed because arguing for contextual transparency while concealing my own AI use in an essay about AI transparency would be an intellectual contradiction I could not sustain. The disclosure I am making is a choice, not a mechanical obligation. That is exactly what the principle requires. Transparency is not a rule to be followed. It is a judgment to be exercised about what the reader is entitled to know, and what you are asking them to trust.

When disclosure is not enough

There is, however, a category of situations where the entire framework built so far encounters a problem it was not designed to solve. The framework assumes that the primary ethical obligation runs from writer to reader. Disclose honestly, and the reader can calibrate their trust. The asymmetry of information is corrected. The obligation is discharged.

But there are contexts where this is the wrong description of what is at stake. In certain disciplines, the act of writing is not the delivery mechanism for a finished thought. It is the site where thinking happens. A philosophy student, for example, writing an essay on the problem of free will is not primarily producing a text for a reader to evaluate. They are undergoing a process: sitting with difficulty, finding that an argument does not hold, revising under the pressure of a counter-example, discovering through articulation what they actually believe. The essay is constitutive of that process, not merely evidence of it. When AI performs that process on the student's behalf, disclosure to a reader does not restore what was lost. The student can be perfectly transparent about their AI use and still have bypassed the cognitive work the essay was designed to produce in them. The philosophy department that bans AI-generated essays is not making a claim about disclosure. It is making a claim about what philosophy education is for.

This concern is not confined to universities. The journalist who uses AI to draft their analysis may have short-circuited the very process of reporting that would have led them to the insight. The researcher who outsources the writing of their argument may find, when challenged, that they cannot retrace the path that produced it, because they never walked it. These are losses no disclosure statement can recover.

The decision guide accompanying this essay is explicit about its own scope: it governs disclosure obligations in public and professional writing, where the reader is the primary stakeholder. It does not resolve the prior question that arises in pedagogical and formative contexts — whether AI should be used at all, regardless of how transparently. In those contexts, disclosure is necessary but not sufficient. Knowing the limits of a framework is part of using it honestly.

Disclosure norms for AI writing are not yet settled, and they should not be written in stone while the technology continues to shift beneath them. What they need is a durable principle: transparency is owed not to AI, but to the people who read what it helps produce. The obligation to disclose is triggered not by which tool was used or how much text it generated, but by the gap between the human involvement the reader assumes stands behind the text and what actually occurred. Where writing is itself the work and the development of the writer is the point, the disclosure question gives way to a harder one: not what to tell the reader, but whether to use the tool at all. The line is not always obvious. But knowing which question you are actually facing is the beginning of answering it honestly.